Response surface models

Instantly predict and improve complex design behavior while saving time and computational resources.

Estimate errors, describe complex problems and validate data

Complex design assessments often require time-consuming, expensive simulations. When physics-based simulations are too costly, response surface models (RSMs) provide a continuous analytical map of the design space. They reveal variable interactions and the sensitivity of key responses to each input, enabling rapid evaluation of design alternatives. Our RSM tool allows you to quickly build, validate, and optimize effective meta-models from existing datasets.

What are response surface models?

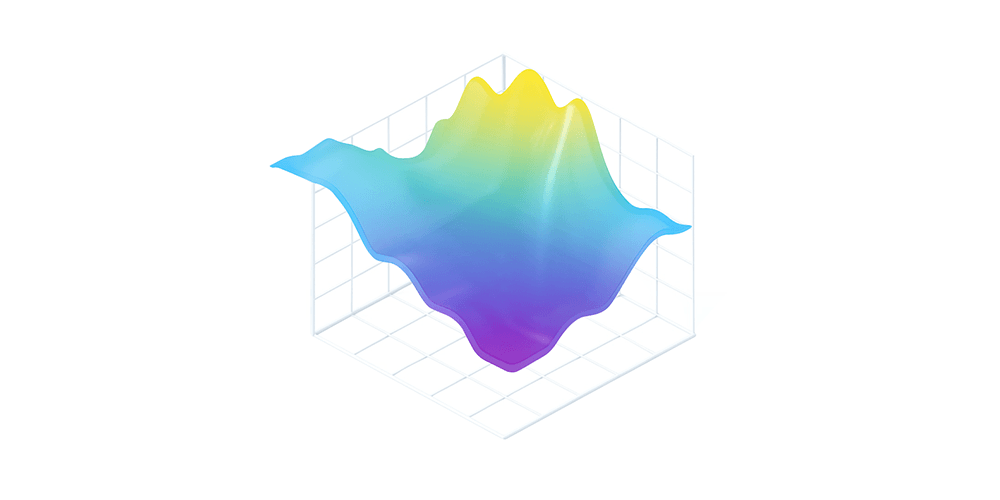

Response surface methodology employs statistical and numerical models to accurately map the input/output behavior of complex systems. By leveraging a dataset of discrete designs, an RSM algorithm reconstructs the structure of the unknown underlying function. This approximation is built on specific assumptions regarding the response surface, such as its smoothness (regularity), physical consistency, or statistical distribution.

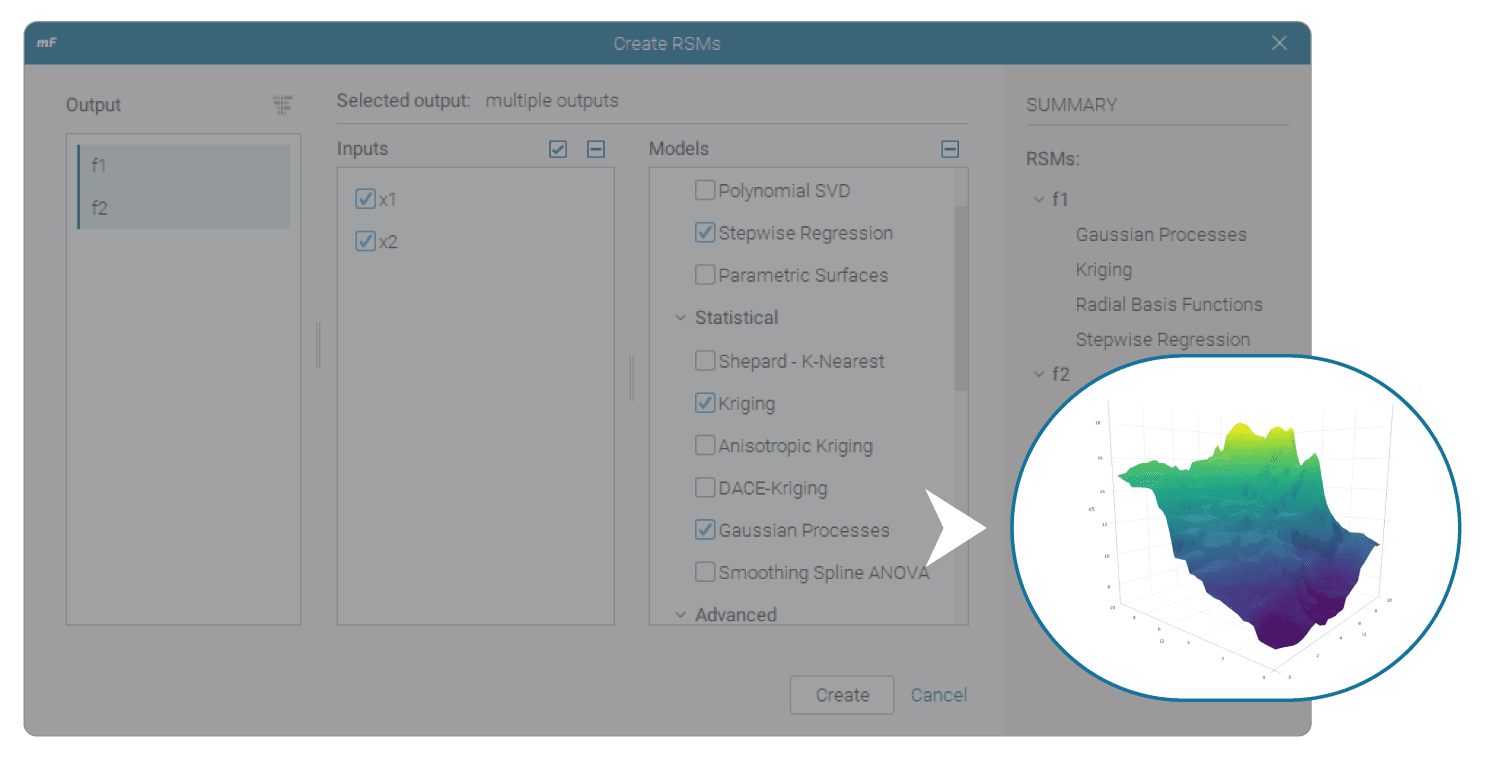

RSM training in modeFRONTIER

With modeFRONTIER RSM technology you can:

- Streamline metamodel creation

Select datasets, variables, and algorithms within a single, unified interface.

- Ensure model accuracy

Use our validation tool to estimate model accuracy with at-a-glance insights.

- Prevent prediction errors

Avoid overfitting or underfitting to maintain model reliability.

- Perform RSM-based optimization

Replace heavy simulation processes with surrogates to speed up traditional optimization runs.

- Export and integrate

Save models as functional mock-up units (FMUs) for integration with third-party platforms, or export as Python code or Excel sheets for external production environments.

Leverage modeFRONTIER to predict designs faster

Choose the most suitable RSM algorithm

ESTECO provides a rich set of algorithms to help engineers and researchers build surrogate models and predict system behavior across a wide range of operating conditions.

Traditional statistical methods

Model the relationships between input variables and output responses, typically through polynomial functions.

- Polynomial Regression

An extension of linear regression that models the relationship between a dependent variable and one or more independent variables using an n-th degree polynomial.

- Stepwise Regression

Efficiently handles large sets of candidate predictor terms by selecting a smaller subset. This creates a simpler model that balances predictive power with protection against overfitting and underfitting.
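As a minimal sketch of the polynomial regression idea above, the following Python example fits a full second-order polynomial in two inputs by ordinary least squares. It is not modeFRONTIER code: `toy_solver`, the 3-level full factorial DOE, and all function names are assumptions made for illustration, and NumPy is assumed to be available.

```python
import numpy as np

def quadratic_features(X):
    """Design matrix for a full second-order model in two inputs."""
    x1, x2 = X[:, 0], X[:, 1]
    return np.column_stack([np.ones(len(X)), x1, x2, x1 * x2, x1**2, x2**2])

def fit_quadratic_rsm(X, y):
    """Least-squares estimate of the six polynomial coefficients."""
    coeffs, *_ = np.linalg.lstsq(quadratic_features(X), y, rcond=None)
    return coeffs

def predict(coeffs, X):
    """Evaluate the fitted polynomial at new input points."""
    return quadratic_features(X) @ coeffs

def toy_solver(X):
    """Hypothetical stand-in for an expensive simulation (assumption)."""
    x1, x2 = X[:, 0], X[:, 1]
    return 1.0 + 2.0 * x1 - 3.0 * x2 + 0.5 * x1 * x2 + x1**2

# 3-level full factorial DOE on [-1, 1]^2: nine solver runs in total.
levels = np.array([-1.0, 0.0, 1.0])
X_train = np.array([[a, b] for a in levels for b in levels])
y_train = toy_solver(X_train)

coeffs = fit_quadratic_rsm(X_train, y_train)

# Predict an unseen design without calling the solver again.
X_new = np.array([[0.3, -0.7]])
y_pred = predict(coeffs, X_new)
```

Because the toy response is itself quadratic, the fitted model reproduces it almost exactly. Real simulation data would leave a residual that should be checked against validation samples.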

Metamodeling techniques

Create surrogate models to approximate complex systems, offering high accuracy with limited data points.

- Kriging

A Bayesian methodology originally developed for geostatistics. It’s highly effective for predicting variables like soil permeability or mineral extraction.

- Anisotropic Kriging

A refined Kriging RSM method that allows users to control the relative importance of input variables.

- DACE Kriging

A hybrid technique that approximates expensive, complex, high-dimensional simulation models by combining a global regression trend with a local Kriging deviation.

- Gaussian Process

A powerful regression method based on Bayesian inference. Gaussian Processes offer a probabilistic interpretation, characterizing each prediction with a Gaussian probability distribution defined by its mean and variance.

- Smoothing Spline ANOVA (SS-ANOVA)

A non-parametric smoothing method suitable for both univariate and multivariate problems, assuming Gaussian-type responses.

- Radial Basis Functions (RBF)

A powerful meshless tool for multivariate scattered data interpolation. Because RBFs pass exactly through training points, they’re ideal for high-accuracy, noise-free problems.
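The claim that RBFs pass exactly through the training points can be illustrated with a short sketch. The example below assumes a Gaussian kernel with a hand-picked `length_scale` and solves a dense linear system with NumPy. The kernel choice, the parameter value, and the function names are assumptions for illustration, and the RBF implementation in modeFRONTIER may differ.

```python
import numpy as np

def gaussian_kernel(A, B, length_scale):
    """Pairwise Gaussian RBF values between the rows of A and B."""
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-d2 / (2.0 * length_scale**2))

def fit_rbf(X, y, length_scale):
    """Solve K w = y so the interpolant reproduces y at every training point."""
    return np.linalg.solve(gaussian_kernel(X, X, length_scale), y)

def predict_rbf(X_train, weights, X_new, length_scale):
    """Evaluate the weighted sum of kernels at new points."""
    return gaussian_kernel(X_new, X_train, length_scale) @ weights

# Five noise-free samples of a one-dimensional response.
X_train = np.array([[0.0], [0.25], [0.5], [0.75], [1.0]])
y_train = np.sin(2.0 * np.pi * X_train[:, 0])

scale = 0.2  # assumed kernel width; in practice it would be tuned
weights = fit_rbf(X_train, y_train, scale)

y_at_train = predict_rbf(X_train, weights, X_train, scale)          # matches y_train
y_between = predict_rbf(X_train, weights, np.array([[0.125]]), scale)
```

With noisy data the same exactness becomes a drawback, because the surface reproduces the noise as well. That is why the text recommends RBFs for noise-free problems.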

Machine learning (ML) methods

Identify patterns in data to model non-linear relationships and solve high-dimensional problems.

- Neural Networks

Statistical learning models inspired by biological brain structure and functions. They’re used to estimate functions that depend on a large number of inputs.

- Evolutionary Design

An interpolating RSM method that uses genetic programming to perform symbolic regression.

- AutoML

Automates the machine learning workflow by training and tuning multiple models within a user-defined time limit.

- Deep learning

Uses a multi-layer feedforward artificial neural network trained with stochastic gradient descent and back-propagation.

- Distributed random forest

A robust classification and regression tool used in modeFRONTIER for both RSM training and sensitivity analysis.

- Gradient boosting machine (GBM)

A forward-learning ensemble method that achieves predictive results through increasingly refined approximations.

- Support vector regression (SVR)

A regression model that uses support vector machines (SVMs) to analyze data, recognize patterns, and perform complex regressions.

Specialized techniques

Advanced approaches for specific modeling scenarios, such as combining data from different fidelity levels or interpolating scattered data points.

- Shepard’s Method

A statistical interpolation RSM method that predicts the values of unknown target points using a weighted average based on the inverse mutual distance between the known points and the target point.

- Parametric Surfaces

An efficient and powerful RSM training method for multivariate interpolation of scattered data, designed to find the optimal parameters for any parametric algebraic function.

- Multi-Fidelity RSM

A simulation technique that combines high-fidelity data with low-fidelity data. This creates an accurate system approximation while significantly reducing computational costs.
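The inverse-distance weighting behind Shepard’s Method, described in the list above, is simple enough to sketch in a few lines of plain Python. The `power` exponent of 2 and the sample data are assumptions chosen for illustration, not modeFRONTIER defaults.

```python
import math

def shepard_predict(points, values, target, power=2.0):
    """Inverse-distance-weighted average of the known values at `target`."""
    weights = []
    for point, value in zip(points, values):
        dist = math.dist(point, target)
        if dist == 0.0:
            return value  # target coincides with a known point
        weights.append(1.0 / dist**power)
    total = sum(weights)
    return sum(w * v for w, v in zip(weights, values)) / total

# Four known designs on the corners of the unit square (illustrative data).
points = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
values = [1.0, 2.0, 3.0, 4.0]

# The center is equidistant from all corners, so the result is their plain mean.
center = shepard_predict(points, values, (0.5, 0.5))

# A target on a known point returns that point's value exactly.
corner = shepard_predict(points, values, (1.0, 0.0))
```

A larger `power` makes nearby points dominate the prediction, while a smaller one produces a smoother, more averaged surface.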

Fundamentals of response surface modeling

This webinar explains how to create a response surface or mathematical model that can be used to predict the results of a new set of experiments, without having to execute those experiments.

How our customers use response surface models

Our customers leverage our extensive library of algorithms to build reliable metamodels, saving significant time and computational resources. By integrating RSM-based optimization into their design processes, they consistently improve product performance metrics.

"modeFRONTIER is a proven software solution for RSMs with a long record of success. It empowers engineers to move beyond automated settings, combining rigorous validation with human expertise to ensure model accuracy. By using high-fidelity fittings to 'anchor' low-fidelity models, users can strategically expand their design scenarios with confidence."

"modeFRONTIER drastically reduced the time required to calibrate GT models. We used response surfaces and direct optimization algorithms — NSGA and Hybrid —- to find the best values for 12 output parameters, measuring the exhaust and intake cam timing angles and volumetric efficiency."

"modeFRONTIER automatically generates designs and RSMs, iteratively evaluates the accuracy levels and completes the process once target model accuracy is reached."

Key challenges of response surface models

Optimizing expensive high-fidelity simulations

Balancing the need for a detailed model with a limited budget for physical experiments or high-fidelity simulations.

Selecting the proper model order

Low-order RSMs may miss complex curvatures, while high-order models risk "chasing" noise. Both scenarios can degrade predictive power.
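One hedged way to see the model-order trade-off is to fit polynomials of several degrees to the same noisy samples and compare their errors on points that were not used for fitting. The sketch below assumes NumPy, a made-up `true_response`, and an arbitrary noise level; none of these come from modeFRONTIER.

```python
import numpy as np

rng = np.random.default_rng(0)

def true_response(x):
    """Hypothetical underlying behavior (assumption for this sketch)."""
    return np.sin(3.0 * x)

# Twelve noisy training samples and a separate noise-free validation grid.
x_train = np.linspace(-1.0, 1.0, 12)
y_train = true_response(x_train) + rng.normal(0.0, 0.05, size=x_train.size)
x_val = np.linspace(-0.95, 0.95, 50)
y_val = true_response(x_val)

def validation_rmse(degree):
    """Fit a polynomial of the given degree and score it on the validation grid."""
    coeffs = np.polyfit(x_train, y_train, degree)
    residuals = np.polyval(coeffs, x_val) - y_val
    return float(np.sqrt(np.mean(residuals**2)))

# Degree 1 cannot follow the curvature; degree 11 interpolates the noisy samples.
rmse_by_degree = {d: validation_rmse(d) for d in (1, 3, 5, 11)}
best_degree = min(rmse_by_degree, key=rmse_by_degree.get)
```

Here the straight line underfits, and the degree-11 fit passes through every noisy sample, which typically hurts validation error. The order worth keeping is the one with the lowest error on unseen points, not the one with the lowest training error.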

Navigating non-linear landscapes

Traditional RSMs can struggle with non-smooth landscapes, where discontinuities and local optima (false peaks) can lead to sub-optimal solutions.

Benefits of response surface models

Gain instant, accurate insight into the relationship between your design parameters and performance objectives.

Efficient data-driven meta-modeling

Leverage design of experiments (DOE) to map the design space with minimal sampling, significantly reducing the cost and time of physical testing.

Targeted design exploration

Use adaptive space filler (ASF) algorithms to add training points to the design space and improve model accuracy based on your custom criteria.

Rapid large-scale evaluation

Replace expensive, high-fidelity simulations with fast mathematical approximations, enabling rapid what-if analysis and global optimization in seconds rather than days.

Strategic resource optimization

Focus high-fidelity simulation only where necessary. Use surrogate models to fill the gaps and maximize your "information-per-calculation" ratio.

High accuracy predictions

Use our extensive library of ML algorithms to build reliable RSMs that accurately analyze and simulate complex, real-world systems.

Actionable data insights

Transform datasets into functional mathematical models that can be used for continuous design improvement.

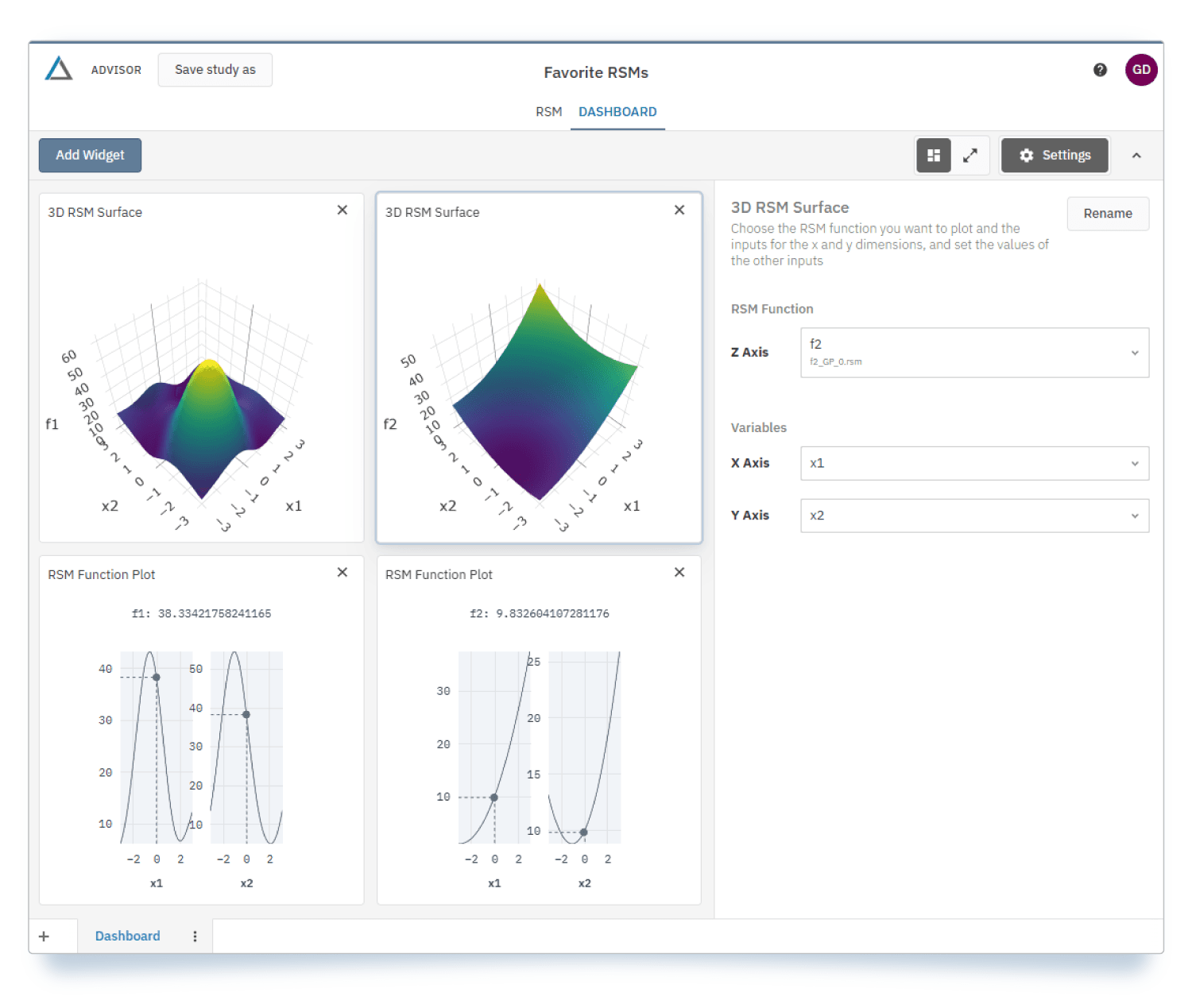

Leverage VOLTA to democratize design space exploration

While RSM training occurs in modeFRONTIER, the VOLTA digital engineering platform provides the deployment environment for cross-team collaboration:

- Import, visualize, and run pre-trained response surface models.

- Securely share trained models across teams in a controlled, centralized environment.

- Enable teams to perform RSM-based optimization runs using high-fidelity surrogate models.

Scale and democratize MDO workflows with VOLTA

Frequently asked questions

Quick answers to questions you may have.

Response surface modeling (RSM) replaces expensive simulations with fast mathematical approximations. By running a solver only for a limited set of points defined by an intelligent design of experiments (DOE), you avoid the inefficiency of random sampling.

The resulting surrogate model predicts outputs for new inputs almost instantly. During optimization, the algorithm evaluates thousands of designs using the surrogate instead of the real solver. You then validate only the best candidates with the real solver, drastically reducing total solver runs and computational costs.
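The workflow in this answer (small DOE, surrogate training, large-scale screening, final validation) can be sketched as follows. Everything here is an assumption for illustration: `expensive_solver` is a cheap toy function, the surrogate is a cubic polynomial fitted with NumPy, and a dense grid search stands in for the optimization algorithm.

```python
import numpy as np

call_log = []  # records every call to the "real" solver

def expensive_solver(x):
    """Hypothetical stand-in for a costly simulation (assumption)."""
    call_log.append(float(x))
    return (x - 0.3) ** 2 + 0.1 * x**3

# 1. Run the solver only on a small DOE of seven designs.
x_doe = np.linspace(-1.0, 1.0, 7)
y_doe = np.array([expensive_solver(x) for x in x_doe])

# 2. Train a cubic polynomial surrogate on the DOE results.
surrogate = np.poly1d(np.polyfit(x_doe, y_doe, 3))

# 3. Screen thousands of candidate designs on the surrogate only.
candidates = np.linspace(-1.0, 1.0, 5001)
predicted = surrogate(candidates)
top = candidates[np.argsort(predicted)[:3]]

# 4. Validate just the three best candidates with the real solver.
validated = {float(x): float(expensive_solver(x)) for x in top}
best_x = min(validated, key=validated.get)
```

In this sketch, 5,001 designs are screened with only ten solver calls: seven for the DOE and three for validation.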

Yes. In modeFRONTIER, RSM builds surrogate models for each objective to learn how inputs affect different outputs. The multi-objective optimizer then runs on these surrogates to evaluate thousands of alternatives in seconds. This process produces a Pareto front, allowing you to explore trade-offs without the overhead of traditional solvers.
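To make the Pareto front idea concrete, here is a small plain-Python sketch that evaluates two hypothetical surrogates over a grid of designs and keeps only the non-dominated ones. The surrogate functions, the objective names, and the brute-force filter are illustrative assumptions, not the multi-objective optimizer used in modeFRONTIER.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and better in one (minimization)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_indices(points):
    """Indices of the points that no other point dominates."""
    return [
        i for i, p in enumerate(points)
        if not any(dominates(q, p) for q in points)
    ]

# Hypothetical surrogates for two competing objectives of one design variable.
def surrogate_mass(x):
    return x**2

def surrogate_stress(x):
    return (x - 2.0) ** 2

# Evaluate every candidate on the surrogates; no real solver is involved.
designs = [i / 100.0 for i in range(-100, 301)]
objectives = [(surrogate_mass(x), surrogate_stress(x)) for x in designs]

front = pareto_indices(objectives)
front_designs = [designs[i] for i in front]
```

For these two toy objectives the non-dominated designs are those between the two individual optima, which is the trade-off region an engineer would explore.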

You can compare predictions against independent validation samples (points not used to build the model) to determine real accuracy. By reviewing error metrics like RMSE or maximum error and inspecting response surface plots for oscillations, you can identify poor sampling or overfitting. If needed, refine the DOE by adding targeted samples to improve accuracy without restarting the workflow. This iterative approach allows you to build, check, and improve until you are confident in your results.
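The two error metrics named in this answer are straightforward to compute. The plain-Python sketch below uses made-up predicted and reference values purely for illustration.

```python
import math

def validation_metrics(predicted, actual):
    """Return (RMSE, maximum absolute error) over paired samples."""
    errors = [p - a for p, a in zip(predicted, actual)]
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    max_error = max(abs(e) for e in errors)
    return rmse, max_error

# Illustrative numbers only: solver results at validation points
# and the surrogate's predictions at the same points.
actual = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 4.0]

rmse, max_error = validation_metrics(predicted, actual)
```

RMSE summarizes the typical error, while the maximum error exposes the single worst prediction. A low RMSE alongside a high maximum error usually points to one poorly sampled region.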

If the response surface is inaccurate, you can refine it step-by-step in modeFRONTIER rather than starting over.

First, you detect issues early through validation errors or poor predictions. These signal where the model struggles and help you avoid blind optimization.

Next, you add new samples in critical regions. This targeted DOE improves accuracy without requiring a high number of extra solver runs.

If needed, you switch to a different surrogate model. For example, Kriging often performs better than polynomials for non-linear behavior.

Then, you rebuild and revalidate the response surface. This iterative loop continues until errors drop to an acceptable level.

Finally, you validate key designs with the real solver. This protects decision quality while keeping computational costs under control.
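The refinement loop described in this answer can be sketched in one dimension. The example assumes NumPy, uses piecewise-linear interpolation (`np.interp`) as a deliberately simple surrogate, and treats a cheap toy function as the solver so that reference values at the check points are available. In a real project those reference values would come from independent validation runs, and the sampling rule here is an illustrative assumption, not a modeFRONTIER algorithm.

```python
import numpy as np

def solver(x):
    """Hypothetical stand-in for the real solver (assumption)."""
    return np.sin(4.0 * x)

x_train = np.linspace(0.0, 1.0, 4)     # initial DOE
y_train = solver(x_train)
x_check = np.linspace(0.0, 1.0, 101)   # points used to judge accuracy
y_check = solver(x_check)
tolerance = 0.02                       # acceptable maximum error (assumed)

history = []
for _ in range(50):                    # hard cap on extra solver runs
    # Rebuild the surrogate and revalidate it.
    y_hat = np.interp(x_check, x_train, y_train)
    errors = np.abs(y_hat - y_check)
    history.append(float(errors.max()))
    if errors.max() <= tolerance:
        break
    # Add one new sample where the model currently struggles most.
    x_new = x_check[np.argmax(errors)]
    merged = np.append(x_train, x_new)
    order = np.argsort(merged)
    x_train = merged[order]
    y_train = np.append(y_train, solver(x_new))[order]
```

Each pass adds a single solver run in the worst-predicted region, so the sample budget goes where accuracy is lacking instead of being spread uniformly.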

Yes, response surface modeling (RSM) enables real-time what-if analysis within the ESTECO technology ecosystem, particularly in modeFRONTIER.

Once you build a response surface from a limited set of solver runs, the resulting surrogate model evaluates new inputs almost instantly. This allows you to change parameters and visualize results in real time, making interactive exploration possible without launching new simulations.

By validating the most critical scenarios with the real solver, you keep decisions reliable and exploration fast, turning slow simulations into real-time insight tools.

ESTECO technology lets you decide which RSM to use based on problem behavior, data size, and accuracy needs.

Start by looking at response behavior. If trends are smooth, a polynomial model is often enough. For highly nonlinear or irregular responses, Kriging or RBF models adapt better to local effects and improve accuracy. You should also consider your sample size. Simple models require fewer points, while advanced ones perform better with more data. Use ESTECO tools to check validation errors and compare models side-by-side.

A common approach works well:

- Start simple

- Validate

- Switch models if errors stay high

Experience VOLTA and modeFRONTIER software

Whether you have a question about our products, licensing options or pricing, our solution experts are here to assist you.